How do we know what we think we know about strength & fitness?

A bird's eye view of epistemology in the strength coaching/fitness fields

Although there are a few distinctly different approaches to epistemology in the fitness field, I rarely see them explicitly discussed. They’re usually just assumed in the premises of any given article or approach of a particular coach, company, or institution, and the rest of the article or training philosophy proceeds from there. Occasionally an approach is critiqued, but as you might expect, it’s often straw-manned in order to do so. I’ve yet to see them reviewed in a meta fashion that compares and contrasts them, and tries to explain them in a way that each of their respective adherents would more or less agree is accurate.

This article is my attempt to do that, albeit in a generalized - thus simplified - format. An overview piece like this can’t capture every shade of grey, so I won’t attempt to do so. It’s meant to be a broad summary, not a deep dive, but given that constraint, my goal is to describe the two basic approaches I’ve seen in a way that an adherent would find at least mostly satisfactory, but then critique them, and come up with my take on the best practice currently available based on what we know right now.

Bro Science: The Granddaddy of em all

Organized fitness as an independent, competitive endeavor for its own sake is fairly young, and as in many sports, a lot of its developments came from copying or trying to improve upon what the best people were doing, using guess and check or A/B style testing on yourself or a small group of acolytes.

The attraction of this approach is obvious - if the biggest or strongest guy is doing it, it must be pretty good, right? Who are you to question what someone twice your size is doing, after all? The proof is in the pudding. End of story.

To this day, some of the most common retorts thrown about in the strength-sports world are, “Oh ya, how much do YOU lift?!” or “If so-and-so’s method is so great, why don’t I see him in the warmup room coaching a dozen elite athletes at nationals?!” Superficially compelling, and perhaps not even entirely without merit - theory must work in the real world to be worthwhile - but still extremely simplistic and uncompelling to anyone not already invested in a different system.

While fealty to bro science has been surprisingly resilient and still comprises a large proportion of how people are influenced to train, an analytical mind quickly finds some flaws.

The first, big glaring one is that it’s essentially a big appeal to authority. Granted it’s based on some empirical evidence too - we’re following whoever gets the best results - but that evidence is hopelessly confounded. How do we know that the biggest or strongest guy couldn’t be even bigger or stronger if he took a different approach? How do we disentangle variables like genetic potential, effort, consistency, the use of performance enhancers, etc.? If we followed this idea to its logical conclusions, we’d still be training like Eugen Sandow did over 100 years ago.

A frequent counter-example against the “Oh ya, well how many champions have YOU coached?!” line, is the development of the Fosbury Flop in the high jump. Fosbury was mocked and ridiculed for his silly looking technique, and of course told that no champion or gold medalist had ever done it that way. And that was true, but his method was still superior, which he proved by going out and winning a gold medal with it in 1968.

And yet, it didn’t come from sports scientists, and Fosbury was warned against it by some coaches who felt it wouldn’t work, and that he’d probably get hurt. But the older methods of high-jumping hadn’t been working well for him, so he experimented. Fosbury wasn’t thinking about the physics; he was just searching for something that felt like it would allow him to more easily get his butt away from the bar. Clearly, he hit on something that worked for him. And then for everybody.

A gold medal was enough for other jumpers to notice, and beginning in the 70s, the world record holders consistently used the flop instead of the old western roll and straddle techniques. But let’s do a little thought experiment. Imagine if it wasn’t Fosbury who figured out this technique, but little old me, your humble author. I’m a 6’ 275 lb powerlifter who is well past my explosive peak and can’t jump nearly as high as I could when I was 16 and casually grabbing 10’ basketball rims with no running start, to show off. If I had just discovered it today and had to prove that the Fosbury flop was superior by my own performance, it would never get anywhere, and I’d be laughed out of the room. It took the fortunate coincidence of the technique being discovered by someone whose baseline performance was already close enough to the top that the small additional benefit of the flop made the difference.

The analogy to strength and muscle building is clear. If a genetically average guy comes up with a superior method, many people won’t believe him simply because “How much do you squat? 525? LOL that’s not even a warmup at nationals!” Meanwhile, if he had used their method, he’d have been stuck at 475 forever. The same is true even if you’re a coach. Unless you’re fortunate enough to get the genetic cream of the crop in your athlete pool, coming up with a method that’s 2 or 5% better won’t allow you to prove its superiority in the bro science method, simply because your athletes are at a 40% genetic (and/or performance enhancing compound) disadvantage, and your 5% better training method can’t overcome that deficit. The other guys will still be bigger and stronger, and everyone whose evaluation starts and ends solely based on end results, rather than cause and effect, will never be open to the possibility that you’re right.
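To make that arithmetic concrete, here is a minimal Python sketch. Only the 40% and 5% figures come from the paragraph above. The 400 lb baseline, the assumption that the edges multiply, and every function and variable name are hypothetical, chosen purely for illustration.

```python
# Illustrative only: the baseline and the multiplicative model are assumptions,
# not measurements. Only the 40% and 5% figures come from the text.

BASELINE_SQUAT = 400  # hypothetical squat (lb) of an average lifter on the standard method


def squat(genetic_edge_pct, method_edge_pct):
    """Squat in lb after applying both percentage edges multiplicatively."""
    return BASELINE_SQUAT * (100 + genetic_edge_pct) * (100 + method_edge_pct) / 10_000


average_standard = squat(0, 0)   # average lifter, standard method
average_better = squat(0, 5)     # same average lifter, 5% better method
gifted_standard = squat(40, 0)   # 40% genetic/PED edge, standard method

# Bro science compares end results across different lifters.
winner_by_results = "standard" if gifted_standard > average_better else "better"

# A cause-and-effect comparison holds the lifter fixed and varies only the method.
winner_by_cause = "better" if average_better > average_standard else "standard"

print(f"average lifter, standard method: {average_standard:.0f} lb")
print(f"average lifter, better method:   {average_better:.0f} lb")
print(f"gifted lifter, standard method:  {gifted_standard:.0f} lb")
print(f"method that looks best by end results:      {winner_by_results}")
print(f"method that is best for the same lifter:    {winner_by_cause}")
```

With these made-up numbers the gifted lifter on the standard method squats 560 lb against 420 lb for the average lifter on the better method, so judging by end results alone picks the worse method, even though the better method added 20 lb for the lifter who actually used it.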

Another shortcoming of bro science is that, especially in the early days, you were limited to knowing what the biggest guy at your own gym was doing, and often the local biggest guys’ approaches differed from each other. Then the muscle magazine era launched and dominated for several decades, so we were able to get insights from the best lifters in the country. We could find out what lifters over at York Barbell were doing, or Bill Pearl or Reg Park. Later we got snapshots into the training of Arnold and Lou Ferrigno, Dorian Yates and Flex Wheeler.

But the problem persisted because these guys, too, often had their own approach that differed one from the other. Yates, for example, is known for his super high intensity, heavy, short duration workouts, while Arnold is known to have crushed tons of volume using a variety of different exercises over a long workout. Similarly in powerlifting, Westside Barbell had a distinctly different approach compared to the legends of the 80s & 90s like Ed Coan and Kirk Karwoski. How should an interested beginner decide what to do?

The internet era of podcasts, Instagram, and YouTube videos gave us even more detailed insight into even more top level lifters, along with plenty of pros, aspiring pros, and high level amateurs - straight from them, instead of edited or gatekept by a magazine or filmmaker. But it didn’t solve this problem at all, because now we could see that hundreds or even thousands of high level people getting good results use an even wider array of strategies and tactics. Which is best? Is there even a best?

So while the basic approach of doing what works for your local biggest/strongest people in the gym has great appeal and has worked for many, it does have some shortcomings as well.

The reason we’re not all still training like Sandow is that some people have enough cojones to ignore the exhortations to just do what the biggest guy does and the mocking comments about knowing better than the champion, and experiment anyway, tinkering around based on some combination of experience and guesswork. Usually the testing is conducted on the experimenter himself, and then if that person becomes prominent, his method is declared the new thing you have to do or else you’re dumb. Sometimes it’s also A/B tested on some of that lifter’s followers or clients, but almost never in anything approaching a scientific manner.

This has on the one hand, obviously led to successful development of some new and improved techniques over time, but on the other hand, still lacks a rigorous mechanism for teasing out cause and effect, for filtering what actually works broadly from what happened to work because that person is a genetic freak or is taking a boatload of PEDs, or just outworks everyone else.

Exercise Science: A Response to Bro Science

Physical culture and exercise were part of many societies dating back to antiquity. The 2500 year old story about Milo of Croton carrying a newborn calf on his back every day and continuing to carry it daily as it grew until he was carrying a full grown cow, was an early example of what we now call progressive overload. Unlike competitors in modern organized fitness competitions of various sorts, Milo was training for Olympic events, not for the fitness itself, but he enjoyed demonstrating his strength in various exhibitionist ways that would later return to popularity, especially in 19th and 20th century North America.

Despite some very early antecedents, the modern version of exercise science is only about 100 years old. The first exercise physiology textbook I’m aware of, Exercise in Education and Medicine by Dr. R. Tait McKenzie, was published in 1910. The Journal of Applied Physiology appeared in 1948, and 75 years later is joined by a bevy of other peer reviewed journals that publish research in the field.

Whether a conscious response to the blossoming physical culturists of the day or not, it served as an intellectual response of sorts. Instead of copying from or doing very small scale self-experimentation to improve on what the strongest people happened to be doing, we would now apply the modern tools of science to the field of fitness.

The result today is a massive and growing pile of peer reviewed articles in many journals, and numerous degree programs in exercise science and exercise physiology, with master’s and PhD programs also churning out more graduates who contribute to the academic literature in the subject.

With the many triumphs of science in the modern era like the automobile, the airplane, quantum mechanics’ evolution from classical physics, the nuclear bomb and power, radio and phones and televisions, antibiotics and more - any discipline that could attach itself to the respect and legitimacy of the umbrella of “science” could receive both research funding and respect from the general population.

But one thing I can’t help but notice is that most of those great advancements of science that gave it such a huge bump in widespread respect and acceptance didn’t come from academic departments or peer review, but from individual rogue tinkerers and engineers, scientists or inventors working outside the mainstream of their fields and often at odds with it. From Gregor Mendel to Darwin, Einstein to Alexander Fleming, Ignaz Semmelweis to Barry Marshall - the gatekeeping and process of peer review was not what led to the great scientific advancements of the modern era. What did was the ingenuity and use of the scientific method by mavericks working outside the accepted consensus of their time.

Be that as it may, exercise science began to be a modern academic field in earnest and continues to grow and expand to this day.

The approach taken by this wing can be summed up as: “Citation needed.”

When making a claim about fitness, an appeal to the strongest guy in the gym or a successful group of lifters or athletes is not only insufficient to this group, but often actively mocked and frowned upon. If you can’t source your claim to a peer reviewed journal, it’s considered unsupported by the evidence and can be summarily rejected, especially if a contrary claim can be cited from a peer reviewed journal.

Issues with the First Two Methods

In case my bias wasn’t already apparent, I don’t find either of the two prior approaches compelling. As someone who started lifting as a high school senior and got some good initial results by copying what the strongest guys at my tiny little community center gym did, and then reading articles in Flex Magazine, I can appreciate some aspects of bro science. As a holder of a degree in biology and big fan of the scientific method, I appreciate some aspects of the exercise science approach. But as complete sense-making philosophies of how to approach fitness, I find both lacking.

Bro science can get things right, but the corrective mechanisms of teasing out cause and effect vs any huge number of possible confounding variables is too weak.

Exercise science is troubled by some of the same issues that led to the following quotes about evidence based medicine:

It is simply no longer possible to believe much of the clinical research that is published, or to rely on the judgment of trusted physicians or authoritative medical guidelines. I take no pleasure in this conclusion, which I reached slowly and reluctantly over my two decades as editor of The New England Journal of Medicine. - Marcia Angell, 2009

“The case against science is straightforward: much of the scientific literature, perhaps half, may simply be untrue. Afflicted by studies with small sample sizes, tiny effects, invalid exploratory analyses, and flagrant conflicts of interest, together with an obsession for pursuing fashionable trends of dubious importance, science has taken a turn towards darkness.” - Richard Horton, editor-in-chief of The Lancet, 2015

“If peer review was a drug it would never get on the market because we have lots of evidence of its adverse effects and don't have evidence of its benefit.” - Dr. Richard Smith, former editor of the British Medical Journal, 2015

What, then, should we think about researchers who use the wrong techniques (either wilfully or in ignorance), use the right techniques wrongly, misinterpret their results, report their results selectively, cite the literature selectively, and draw unjustified conclusions? We should be appalled. Yet numerous studies of the medical literature, in both general and specialist journals, have shown that all of the above phenomena are common. This is surely a scandal.

When I tell friends outside medicine that many papers published in medical journals are misleading because of methodological weaknesses they are rightly shocked. Huge sums of money are spent annually on research that is seriously flawed through the use of inappropriate designs, unrepresentative samples, small samples, incorrect methods of analysis, and faulty interpretation. - Douglas Altman, Chief Statistical Advisor to the British Medical Journal, 1994

Exercise science is its own discipline, yes, and not every problem of evidence based medicine, the context from which the above quotes are pulled, is applicable. The influence of the pharmaceutical industry in funding research, regulatory approval, and the universities and journals themselves, for example, isn’t as much of an issue when researching strength training. But many issues are the same. Groupthink, the tendency to ideologically gatekeep, the vested interests and egos that lead editors, researchers, and peer reviewers to view unfavorably ideas that undermine their own careers or reputations, and every other human issue are surely present.

The replication crisis is more widely acknowledged than ever, and while a lack of reproducibility is an inherent part of the scientific method, and one part of the process by which we eventually discover and learn more over time, that process is stunted if we treat published, peer reviewed articles as both automatically true until proven false (only by another peer reviewed paper), and the only acceptable evidence for what’s true at all.

Further, science itself is under assault by ideology:

https://twitter.com/wolfstrength/status/1654531333752750080?s=20

Though this started in the social sciences, its underlying ethos has now spread to STEM. It’s simply no longer safe to assume that the unbiased pursuit of truth, wherever it takes us, is the foundational ideology of the people doing science. Fealty to the scientific method can take a backseat to ideology, and if the conclusions that the scientific method reaches are unacceptable, then they just won’t be funded, won’t be published, will be retracted, etc.

A few examples. First, from Dr. Flavio Cadegiani, a board certified endocrinologist who also has a PhD in clinical endocrinology.

https://twitter.com/FlavioCadegiani/status/1520740121003573248?s=20

Next is from biologist Colin Wright, reporting on ideological gatekeeping, intellectual inconsistency, and the placing of moral concerns laden with unproven assumptions ahead of both the unbiased pursuit of truth and letting the scientific method and discourse play out:

Anatomy of a Scientific Scandal

Here’s another recent good example from the covid era: Masking young children was a very contentious policy that persisted in much of the US well into 2022, and still exists here and there. Even though it was eschewed in most of Europe and the rest of the world, it was treated as self evident science here in North America to the point that peer reviewers refused to allow publication of papers that argued to the contrary. Even though the very thing at issue was whether it was good science! This is classic ‘begging the question’ - assuming your conclusion in the premises, and proceeding from there as if it’s Absolute Truth.

Of course, the very issue the research was investigating was whether masking young children was efficacious, and even if it was, if there were downsides that might outweigh the benefits. But ideological gatekeeping based on question-begging and moralizing led to supposed “scientists” not even allowing the paper to be published at all. Not allowing the scientific method and process to play out, the weighing of evidence and analysis to determine the truth. Here we have people who “Trust The Science” actually bragging about how the sorting process of what gets published at all, to make it into The Literature that they venerate and worship, can be filtered ideologically just as much as for quality.

You could argue that these topics - covid treatment, masking, and the medical treatment of gender confusion in minors - are inherently more political in nature, and thus subject to ideological gatekeeping more than exercise science. There is some truth to that. But that doesn’t mean there’s no room for financial incentive, ego, career and reputation protection, and yes, even training ideology, to lead to gatekeeping of funding and publishing research for the same reasons, albeit perhaps less hotly contested in the front pages of public knowledge.

Applied Insights from Economics

The insights of James Buchanan, who won the Nobel Prize for his work on Public Choice Economics, are useful here too.

Buchanan turned the longstanding foundational assumption of political science, that political actors are well intentioned, on its head. “Politics without romance,” as he called it, referring to the fact that human nature exists, and the people who occupy political positions and institutions are no less human than the ones in the private sector. The same motivations of greed, self interest, and all the rest animate them just as much and just as easily as the rest of us. Given this key insight, it’s extremely safe to say that this issue of ideological and other biases and motivations isn’t limited to hotly contested political topics, but is indicative of a pattern in human nature. We can assume the same is at least sometimes true not just for what gets published, but also for what research gets funded in the first place, even in areas outside of hotly contested political debate.

The scientific method is a value-neutral pursuit of truth, but scientists are not. They are people just like the rest of us, and as long as there are human beings doing the gatekeeping, biases or agendas of financial interest, ego, ideology, and others will be a factor. We can’t attribute infallibility to a system that is set up to fail at these points.

For example, here’s some recent patently silly research, published and disseminated in the media:

Sam Krapf, owner of Ground Zero Strength in Twin Falls, ID, has a master’s degree in the field, and chipped in earlier today with this excellent anecdote that explains how supposed experts in exercise science can fail at the most essential task of understanding the very basic fundamentals of their own field:

https://twitter.com/sam_gzstrength/status/1680246270886301696?s=20

Mark Rippetoe wrote a good article on this for T-Nation nearly a decade ago, highlighting some absurd papers that made it through peer review and were published: “The Problem with ‘Exercise Science’”.

I myself highlighted a paper for analysis just a couple months ago, breaking it down and showing how you shouldn’t conclude anything definitive from it. It’s not a terrible paper or anything, but it’s certainly too limited to draw definitive conclusions about training from. Yet it’s used by some who follow the exercise science approach to justify a whole general outlook - that using exercises that allow more weight to be lifted isn’t necessarily important, and therefore high bar or front squats can be just as good as low bar squats, for example, in training for strength.

I tweeted on this larger issue last year as well, pulling a quote from the excellent fellow SubStacker el gato malo, using harsher language than I’d use for an article like this, but the point still stands:

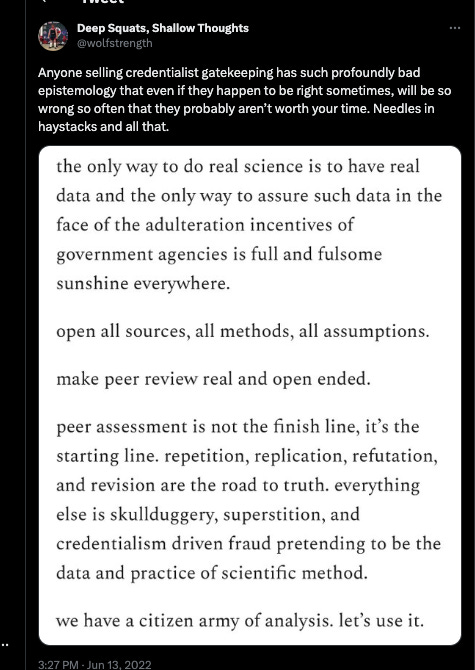

https://twitter.com/wolfstrength/status/1536445086208229376?s=20

His quote there is worth reading in full, as it summarizes and encapsulates the problems with the current model of peer review-as-science about as well as can be done in such a succinct manner, and addresses the issues of gatekeeping discussed above. There’s no perfect solution, but open source peer review is the best one we have, far better than the credentialist gatekeeping currently espoused and enshrined by the exercise science crew, which has poor mechanisms to correct for all the problems identified above.

As my friend Cody said just earlier today:

https://twitter.com/AnninoCody/status/1680230891652521987?s=20

At this point, the situation looks bleak. Bro science has some merit, but obviously isn’t always correct. Exercise science can play a valuable role, but isn’t the gospel that some of its adherents treat it as. How do we know anything about this stuff??

Induction and Deduction: A Synthesis

The approach I find most compelling and most consistent with the scientific method itself, is a combination of inductive reasoning from the observations we make in the gym (a more rigorous version of bro science) and deductions we make less from poring over peer reviewed journals, and more from the basics of well established general and human physiology, anatomy, and basic mechanics. While all areas of science are subject to revision as we learn more, these are more established and stable disciplines that have been around longer, have been studied more thoroughly, and have been used as the basis for more things that we think we know, than the specific peer reviewed exercise journals.

Take the foundational knowledge of those fields (human anatomy and physiology, mechanics), and make deductions from them. Test those deductions in the imperfect, non-randomized and non-blinded, but still valuable crucible of the actual results in the gym. With enough people, with enough variety of demographics, over a long enough time, you will notice some patterns. The stronger they hold and the longer they obtain, the more confident you can be that you’re right, while always retaining the possibility that you’re not.

Take observations you make in the gym, whether in those informal experiments or not, and recognize patterns over time. People who do this tend to be bigger or stronger. People who do that tend to stall out quicker and stay stuck. Again, with enough careful (rather than ad hoc and hasty) observations over enough time, you reach some presumptive conclusions. You test those ideas both against the generalized knowledge of human anatomy and physiology and biomechanics (are they in line with or contradictory to what we know about those things?), and then once again test them in the crucible of the gym to see if the pattern holds when subjected to more people, a wider demographic, over a long period of time.

This is not a perfect approach, but it hews more closely to the scientific method than either of the above. It has better corrective mechanisms and less problem with corrupted incentives because of its open source, non gatekept nature that allows anyone to analyze and critique it with equal standing. It’s based on harder, more well established principles of science, while applying an imperfect but still better version of experimentation in the real world.

The biggest bottleneck is that it requires a coach who:

is familiar with the scientific method

is familiar with basic biology, human anatomy & physiology, and basic biomechanics

coaches a sufficient number of people for a sufficiently long time to observe results from a large number of lifters over time

cares more about results than adherence to a specific method for its own sake, but simultaneously uses sound methodology until compelling evidence amasses to the contrary, rather than just a hodgepodge of random things or no method at all

is a close, careful observer

is curious enough to ask questions and to challenge both his own assumptions and those of others in the field, even the biggest names

In my nearly 20 years in this field, this type of person is by far the exception rather than the rule. But they do exist, and this is how I try to practice myself.

The most well known example of this in the field is probably Starting Strength by Mark Rippetoe. This is not to say I think Rip is correct about everything. For example, I disagree with him about trap bars, about the Olympic press, about RPE, and some other things. But his work is the best known example of a basis in this kind of epistemological grounding, so even if 100% of it is disproven in the future, it will still be valuable insofar as it pushed the underlying ideas and methods of the field forward. This is similar to how Newton’s classical mechanics was eventually disproven as a theory to explain everything, but still retains both a large amount of explanatory power as well as historical and methodological significance to physics and science as a whole.

Specific disagreements may be heated or significant but the overall approach and methodology is directionally the most correct, as far as I can see. It doesn’t just assume what the strongest guy does is best, but neither does it ignore the day to day observations that a keen eyed coach can make in the gym. It doesn’t just assume peer reviewed institutionalized Science is correct, but neither does it ignore the scientific method or basic foundational knowledge in the relevant fields.

It puts these things together in a way that can produce real world experiments, even if imperfect ones, and use a large number of results over a long period of time, without ideological or other gatekeeping, to continually refine itself. It allows outsiders to critique it, so that no one person or company or source of funding has a monopoly on truth and what gets out there.

The adversarial and replicative process itself is a massively important part of the refining of these methods and ideas, and this synthesis best allows those things to come to fruition. It’s frustrating not to be able to point to a single source, a single institution or guru or company and say all knowledge and info from this source can be perfectly trusted, always.

But over time, this process is what allows us to get closer and closer to the truth, and better and better at coaching regular people and athletes to success.
