One of my favorite topics to return to time and again is the evidence based fitness worldview and why I don’t adhere to it. Two of the three posts that have generated the most new subscriptions for this Substack thus far have been about this topic, so apparently people like reading about it, too. Hopefully that trend continues, as I’ll be taking a case study from my own training to give yet another example of the shortcomings of the evidence based approach.

To clarify, when I refer to the evidence based approach, I’m not referring to people who merely take peer reviewed exercise science literature as one piece of evidence among many, a small piece in a large puzzle that can be discarded along with your 2006 copy of Flex Magazine if it doesn’t work in the real world. Just kidding, never throw out a badass picture of Ronnie Coleman. Your Journal of Strength and Conditioning Research, on the other hand…

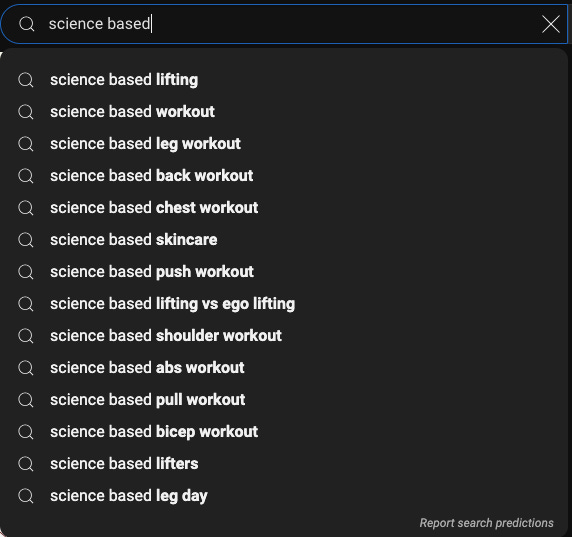

Instead, I refer to a popular and seemingly growing group of people who believe that any claim not sourced to peer reviewed literature is vastly inferior at best and immediately dismissible as anecdote at worst. I snapped this screenshot of YouTube’s suggested search terms after typing “science based” just now, so you can see how popular this worldview has become:

The first result after clicking on the top term, “science based lifting,” is this video with over 4 million views, by YouTuber Jeremy Ethier, who has almost SIX AND A HALF MILLION subscribers! His website is called Built With Science, in case there was any confusion.

There are many other influencers and coaches in this evidence based maxxing space, some with degrees in the field, some even with terminal degrees such as MDs or PhDs. Suffice it to say, this way of looking at fitness and evaluating its quality is very popular right now. And it’s understandable why. The term “science” has a lot of well earned cultural cachet, after bringing us theoretical understanding and practical inventions and technologies that our ancestors just a few hundred years ago might’ve considered miraculous, magical, or supernatural.

But are all things labeled “science” created equal?

Rigorous, hard science is supposed to make falsifiable predictions, which confirm or deny the model or hypothesis upon which they’re based. If the findings or predictions of a scientific field don’t match what we see in the real world, should we ignore our own lying eyes, dismiss what we see as mere anecdote, or should we ask how rigorous this science really is? What about when we see this over and over - how long should we continue to ignore our own lying eyes?

Training Volume: A Case Study vs The Literature

First, The Literature

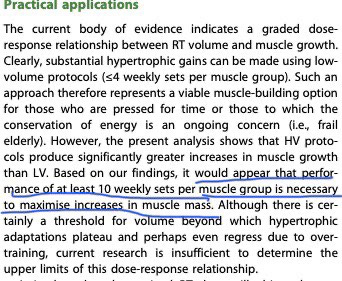

This 2017 meta-analysis has become one of the most commonly cited sources for the evidence based crew. The authors’ conclusion, upon reviewing and analyzing the breadth of the peer reviewed literature, is that AT LEAST 10 weekly sets per muscle group are needed to stimulate maximum growth, suggesting more is probably better up to a certain limit that we don’t know yet.

In this recent 2023 interview, one of the authors of this study reiterates 10 sets as a starting point, and suggests periodizing between 10, 15, and 20 sets per week for maximum evidence based benefit:

While the author does mention individual differences, they seem to be a secondary concern: Yes, there is some individual difference, but 10 is a really good starting point and pushing it up even more seems to help most people. I don’t think this is a biased interpretation of his words.

If we take this as true, and assume 4.3 weeks per month, we’re looking at 43-86 working sets per month as the recommended range for maximizing gains, with the upper limit possibly higher, but we don’t know for sure yet.
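For anyone who wants to check the weekly-to-monthly conversion, here is a minimal sketch of the arithmetic. The 4.3 weeks per month figure and the 10-20 sets per week range come from the text above; the function and variable names are just illustrative choices of mine.

```python
# Rough conversion of a weekly set recommendation into a monthly one.
# 4.3 weeks per month is the approximation used in this post.
WEEKS_PER_MONTH = 4.3


def weekly_to_monthly(sets_per_week: float) -> float:
    """Convert sets per week into approximate sets per month."""
    return sets_per_week * WEEKS_PER_MONTH


# The 10-20 sets per week range discussed above.
monthly_low = weekly_to_monthly(10)
monthly_high = weekly_to_monthly(20)

print(f"{monthly_low:.0f}-{monthly_high:.0f} working sets per month")
```

Nothing fancy, but it makes plain that the monthly range is just the weekly range scaled up, so the top of the recommendation is double the bottom either way you count it.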

Asking Some Questions

This already doesn’t sound so rigorous to me. The difference between 10 and 20 sets per week, or 43 and 86 per month, is MASSIVE. Imagine doing 5 challenging sets of squats not once but twice a week, and still having to do 2-3 sets each of lunges or Bulgarian split squats and knee extensions on top of that. The difference between that and 2-3 sets of squats + 2-3 sets of knee extensions twice a week is massive. This is like the difference between living in the gym and getting in a reasonable 60-75 minute workout. But that’s the range given.

The nod towards individual differences is good here, but it reminds me of what Nobel Laureate Friedrich Hayek said about economics: “The curious task of economics is to demonstrate to men how little they know about what they imagine they can design.”

Maybe the curious task of exercise science is to demonstrate to men how little they really know about the human body, and how only broad ranges of recommendations can be made, within which there will be so much individual variation, that you simply end up having to tailor every program to the individual anyway.

That would be fine and cool if that’s how exercise science was used, but it’s often used to be very exclusionary and claim that XYZ isn’t optimal, or so and so non-evidence based strength coach is full of shit. That’s fine if you have a rigorous framework that successfully makes accurate predictions, but when your best analysis has such a huge range of possibilities, maybe the best thing to do is not denigrate everyone else’s experience as mere anecdote.

That all said, let’s get on to my case study: me.

The Case Study

In the past, I have done the recommended volume dosage as laid out above. I squatted 2-3 times per week for years, and deadlifted twice a week as well. Even absent accessories, I was doing about 10-15 sets per week of heavy work on my lower body on average. This wasn’t something I did for one quick 3 month stretch, but for years. I had decent success with this, achieving a max squat of 585.

Later on in my lifting journey, I drastically reduced my squatting volume and frequency for a variety of reasons, some injury related, some age related, and some on a hunch that it might just work better for me. I moved to squatting either once a week or once every week and a half, so 3-4x per month. I went from doing higher volume sessions with some regularity to never doing them, performing at most 3, and often 2, work sets per squat session.

Like the higher volume work above, this wasn’t something I did as a short peaking period during which the gains of the high volume could be manifested via decreasing fatigue. Not at all. I did this for somewhere between 1.5 and 2 years. The end result was about 50 lbs added to my squat, from 585 to a 634 squat that was faster and easier than my prior 585 PR, and noticeably bigger legs. I emphasize the latter because, though strength and size are quite connected, at the upper echelons of specialization they aren’t exactly the same thing, and so I want to be clear that I experienced my lifetime highest leg hypertrophy during this time, alongside a heavier squat.

But that’s not all. During a significant portion of this period, including the main 3 month preparation phase for the competition at which I squatted the 634, I had a back tweak that affected my deadlifting so much that I couldn’t pull heavy at all. So for that period, I was doing effectively 0 deadlift sets per week. While this wasn’t the case for the entire 1.5-2 year period, it is still rather staggering.

The end result is that for the most significant chunk of training time that led me to the 634 squat - and bigger legs than I ever had before - I was averaging about 10 sets of lower body work a month, rather than the 10 per week/43 per month minimum recommended by the literature. Even when I was squatting and deadlifting both, I was still doing quite a bit less than 10 sets per week on average, but during the most crucial time, was averaging more like 2-3 sets per week.

Here’s the 585 squat, and below it the 634, which was completed more quickly, despite being about 50 lbs heavier:

While I was slightly heavier when I did the 634 squat, the difference wasn’t profound. While you can’t hold literally all else equal when things are done several years apart, the only significant difference was my training protocol. Besides, the exercise science literature rarely controls for those other variables either, so I don’t see a need to hold my own case study to a standard not even met by the peer reviewed studies.

Anecdote or Data?

If you believe that the exercise science literature is the only lens through which to judge the truth of a claim, when real world results are not in line with The Literature, they are dismissed as anecdote, placebo, nocebo, or something similar. The only thing that can refute the literature is the literature. QED.

Whereas if you are willing to look at the totality of evidence instead of only the literature, and if you insist that for the literature to be valid it must match the real world, you might well see this as indicating an issue with the literature.

This is especially true, I think, in this case. If I had been doing 8-9 sets per week, you could say that individual differences are just slightly more than the authors credit, that the literature is broadly correct within just a slightly wider range than their claims. But I was doing only ~12-25% of the recommended sets per week! A 4-8x factor difference. This isn’t a mere case of slightly broadening the range. Anyone with training experience could tell you that the difference between 2-3 sets, 3-4x a month and 20 sets a week may as well be different planets.
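Here is a quick sketch of where those percentages come from, assuming my roughly 10 lower body sets per month and the same 4.3 weeks per month used earlier. The names are illustrative, and the outputs are approximations that land close to the rounded figures above rather than exact matches.

```python
# Compare my approximate training volume against the 10-20 sets/week range.
WEEKS_PER_MONTH = 4.3    # approximation used earlier in this post
MY_SETS_PER_MONTH = 10   # my rough average during the key training period


def compare_to_recommendation(recommended_per_week: float) -> tuple[float, float]:
    """Return (my share of the recommendation, how many times larger it is)."""
    my_sets_per_week = MY_SETS_PER_MONTH / WEEKS_PER_MONTH
    share = my_sets_per_week / recommended_per_week
    factor = recommended_per_week / my_sets_per_week
    return share, factor


for recommended in (10, 20):
    share, factor = compare_to_recommendation(recommended)
    print(f"vs {recommended} sets/week: {share:.0%} of the volume, "
          f"a {factor:.1f}x difference")
```

Run with these inputs, it works out to roughly 23% of the low end and 12% of the high end, or a factor of about 4.3 to 8.6, which is where the ballpark numbers in the paragraph above come from.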

Yes, this is only one case. But if the science were as good, as rigorous, as its most ardent proponents claim, if it can be used as a cudgel to beat those who don’t abide by it, should even one case be such a massive outlier?

Further, this matches my experience as a coach for the past ~15 years, teaching the lifts to thousands of people. Not specifically this super low volume example - I actually don’t think this low volume, low frequency squatting works for everyone, but the reason why is a topic for another day - but the overall trend: what I see in the real world, in the trenches, often doesn’t match what I see either directly in the literature or in the claims made by its most ardent adherents.

I personally can’t see through the lens of “only the literature can rebut the literature.” To me, when it doesn’t match the real world to a sufficient degree and/or across a sufficient number of people, I go with what I see in the real world.

I certainly agree with the overall sentiment of this article, but I think the disconnect here is in understanding the type of training being done. In Schoenfeld et al., 2017 they are looking at studies with a mix of trained and untrained people of vastly different ages (this is clearly not ideal). But that aside, the training being done in the studies is mostly sets of 7-15 rep maxes and in most cases not using the compound barbell movements. So, basically the individuals are not lifting heavy and are training muscles in isolation. Given this, I think it's perfectly reasonable that they would need more sets per week. I think this highlights what I see as the bigger problem with the "evidence-based bois," and that is their tendency to point to a "finding" of a study without looking into/understanding what the finding is actually applicable to (or if the finding is even supported by the data).